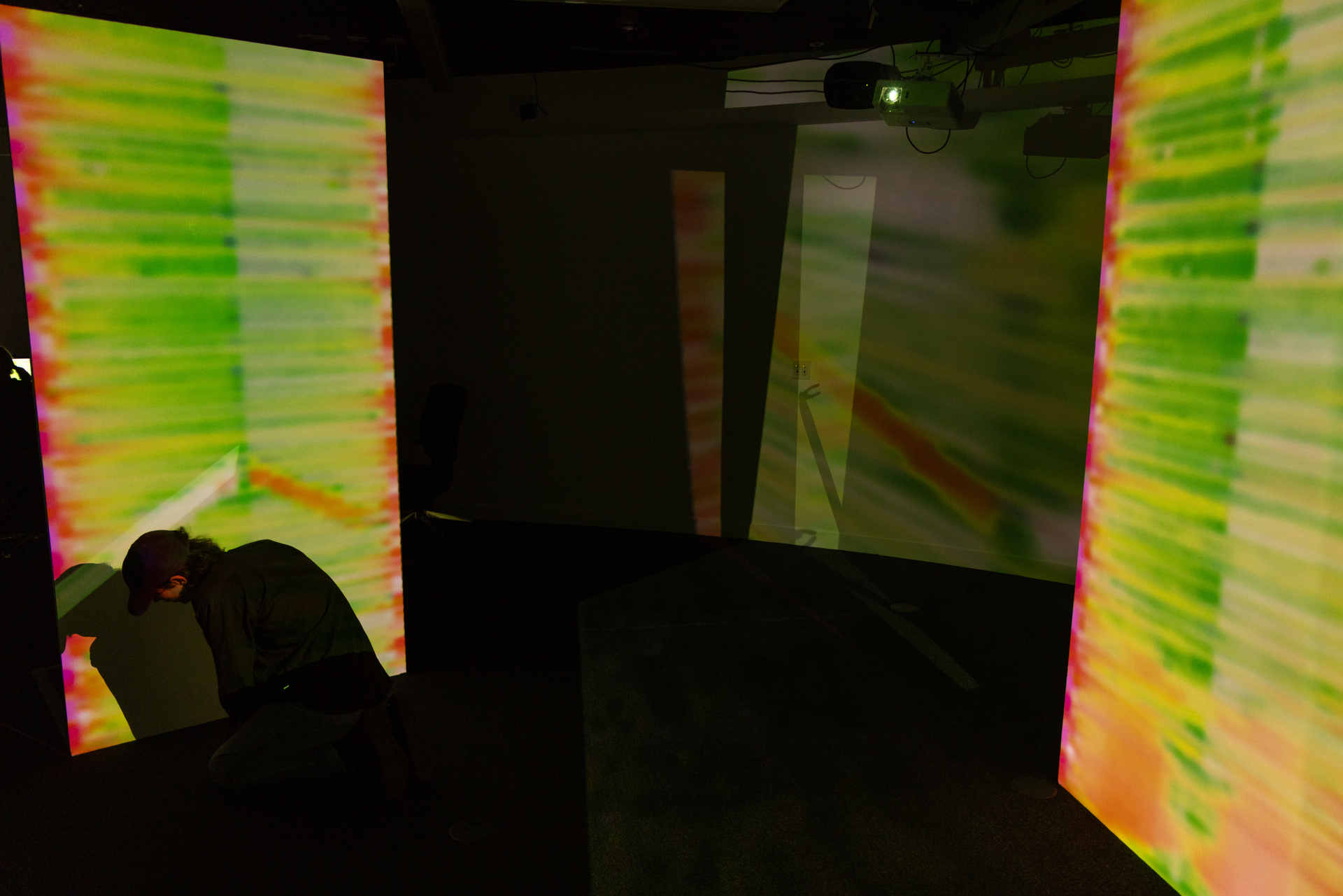

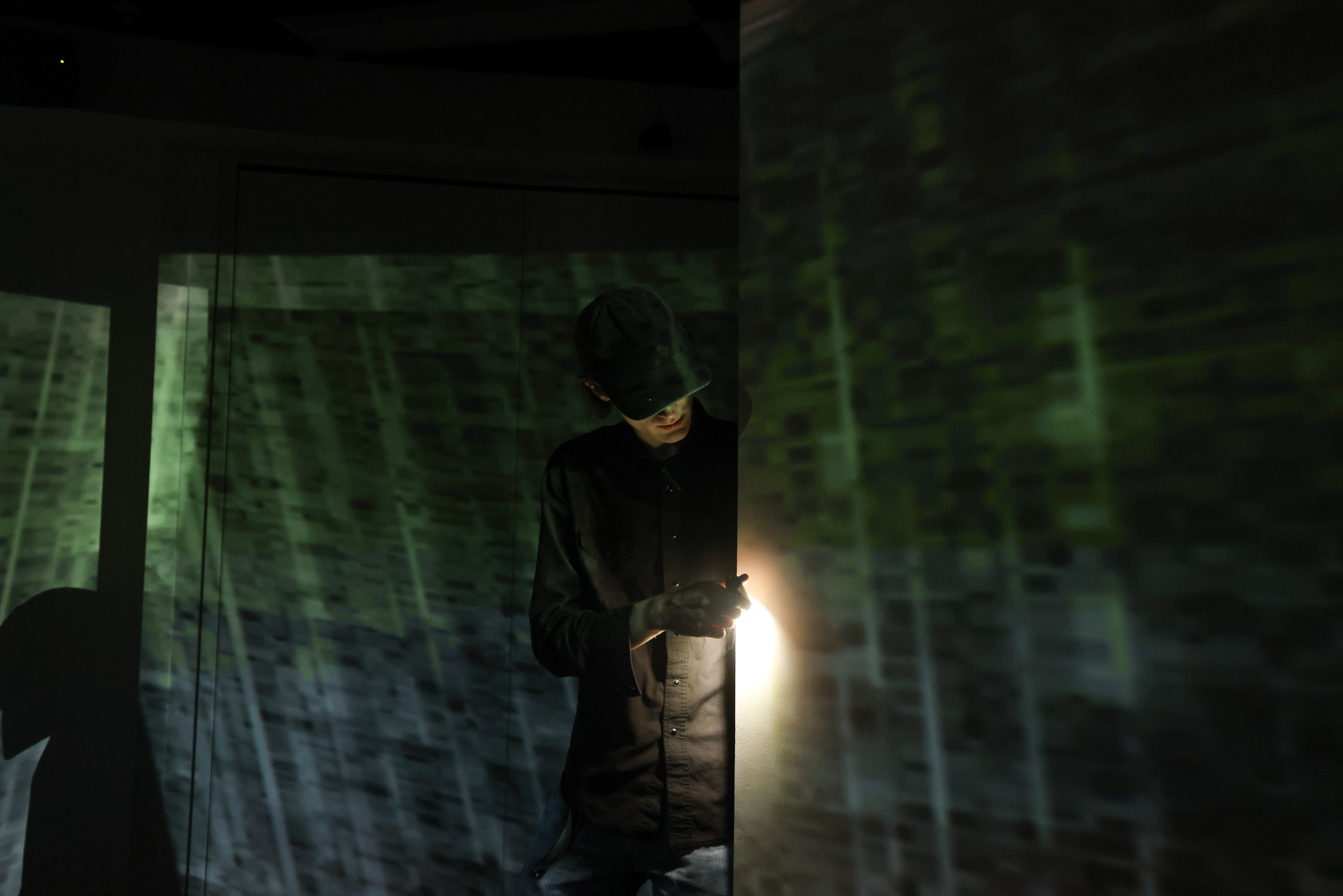

Threshold Systems is an immersive installation exploring the transient and evolving relationship between art and technology, foregrounding investigative technical processes. It examines moments of interaction and disruption as thresholds for change within a responsive system. The viewer’s presence activates the work, positioning them not as observer but as subject, immersed in light and leaving a digital imprint. The installation exposes a shifting boundary between the individual and the system itself.

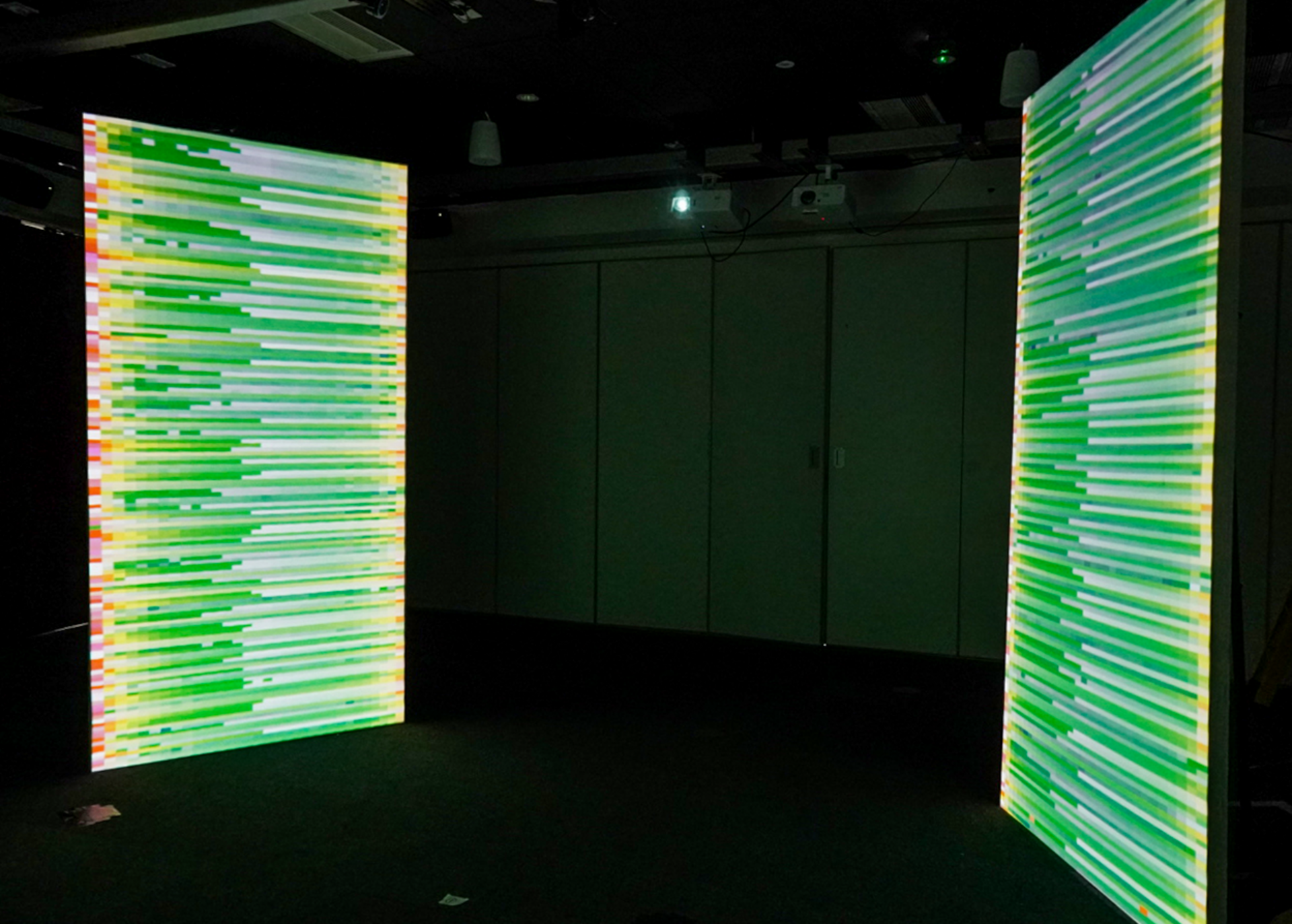

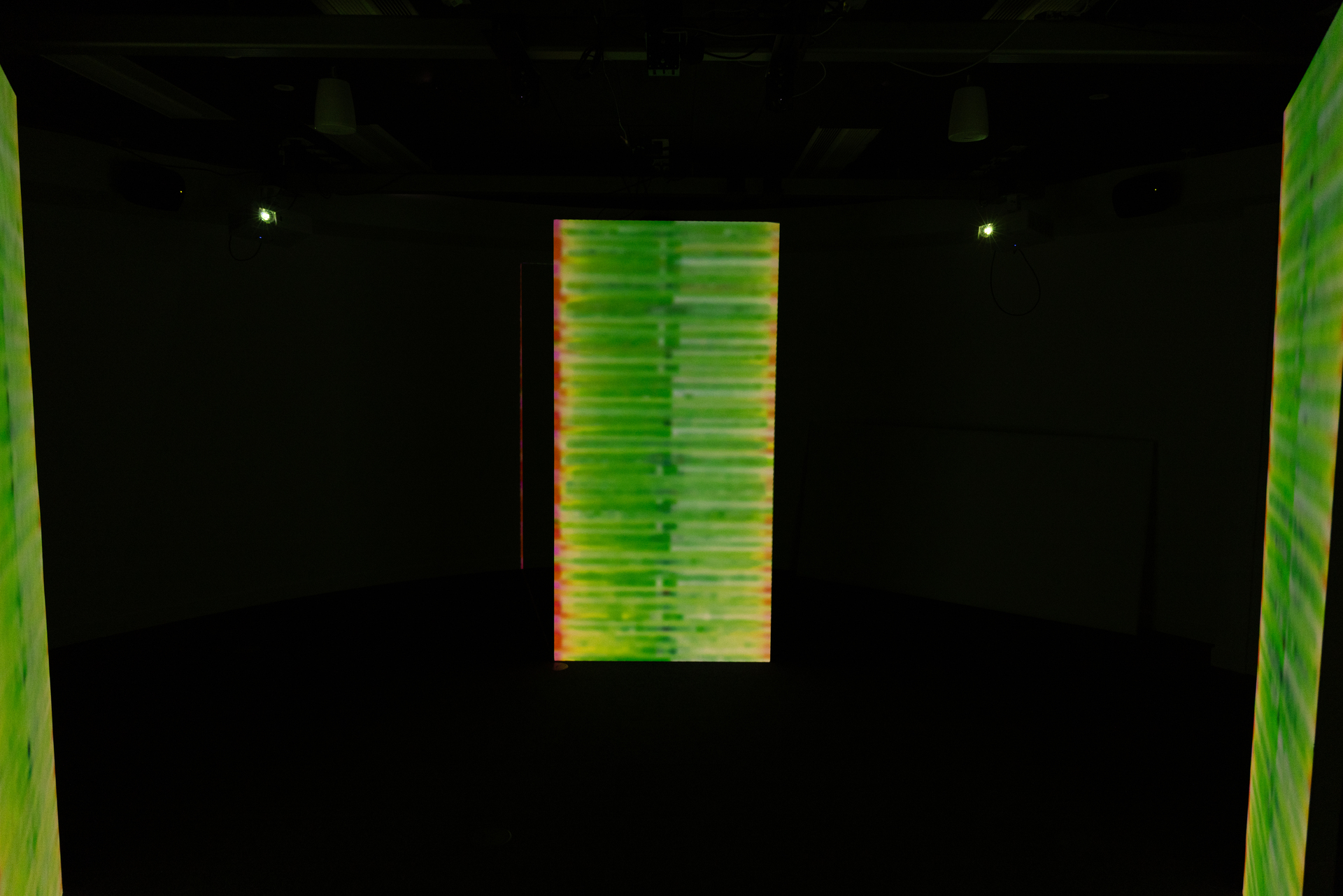

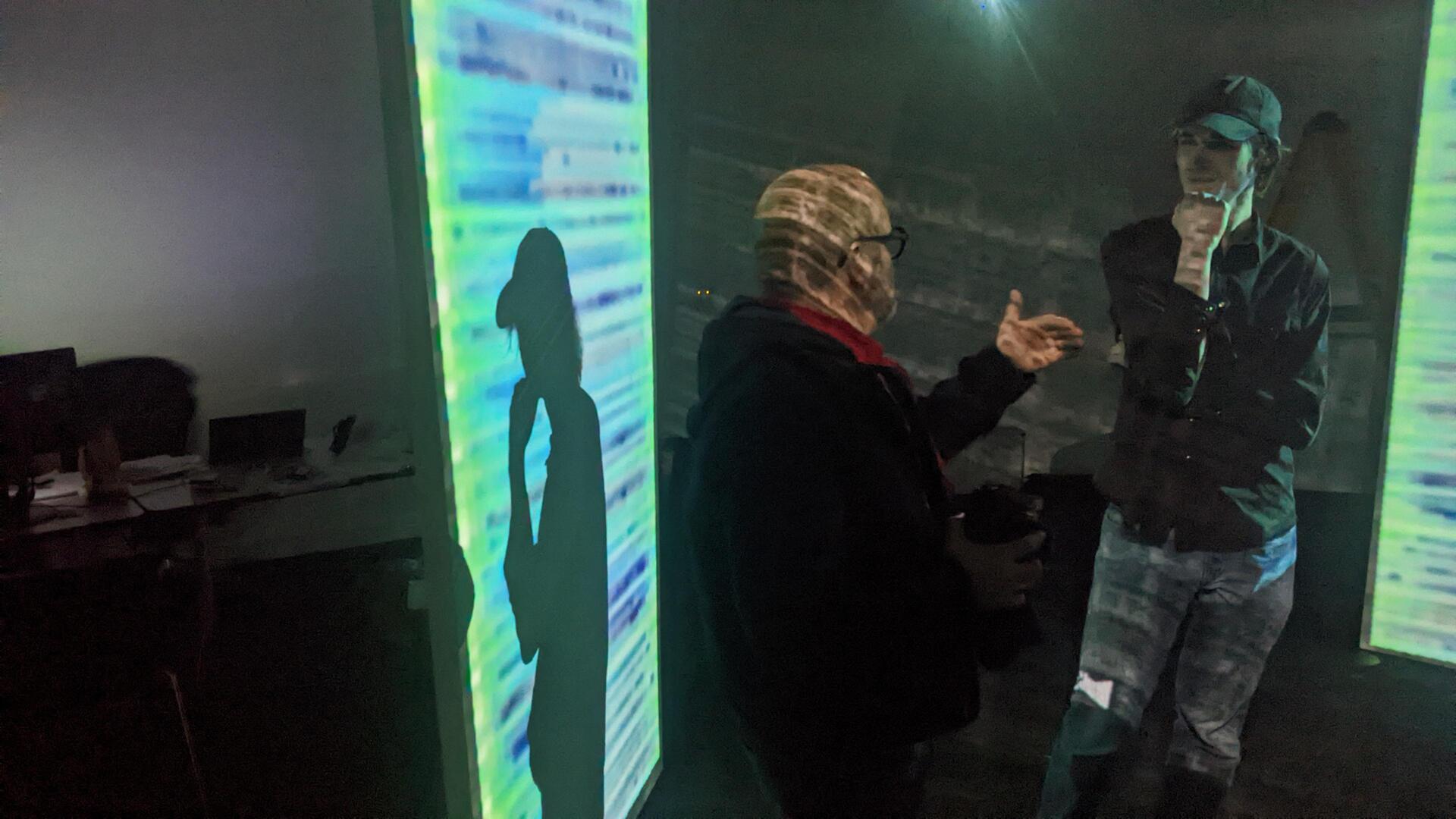

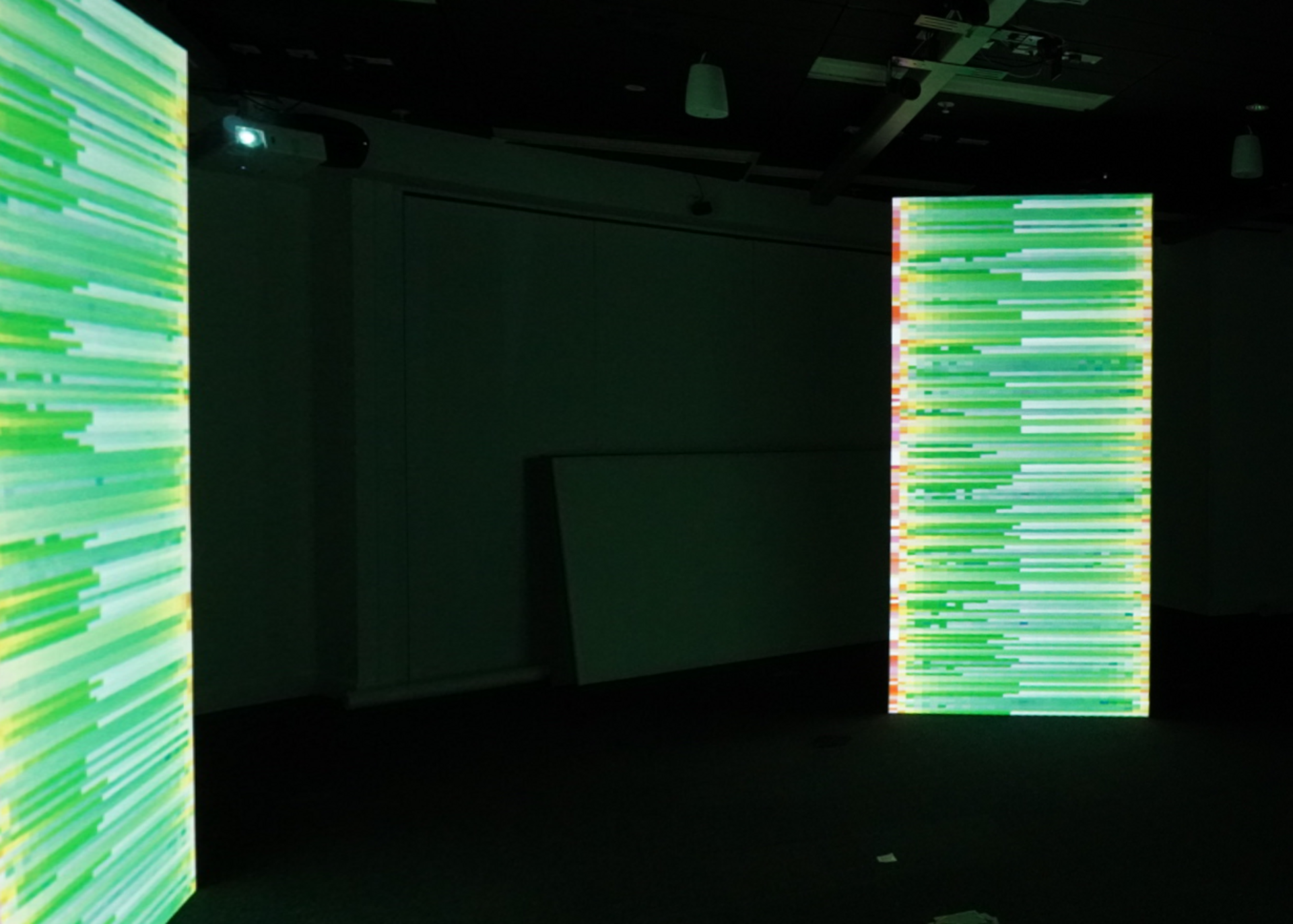

Autonomous processes translate person-driven interaction into system response. Within an automated audio-data feedback loop, human presence, monitored through shadow, triggers visual shifts in pixelated colour and movement. Participants become unauthorised authors of the work, their actions absorbed and reinterpreted by a system whose logic remains partially obscured. Questions of control and agency remain unresolved. The exhibition comprises three freestanding obelisks, each presenting projection-mapped, pixel-based visuals. Operating as interconnected channels, each structure responds to its own distinct soundscape.

These soundscapes, composed of subtle, near-indistinguishable tones, act as an automated, generative force, delicately shaping the visual output in real time. Layered together, they form a baseline cognition of the system, ready to be disrupted by human presence. The translation from sound to image remains unstable and unpredictable, creating a delicate balance between machine-driven processes and audience interaction.

(Development video, 40 seconds)

The pixel, as the smallest visible unit of the digital image, offers a way to understand this system at a human scale. Individually minimal yet structurally essential, pixels accumulate to form larger images, reflecting how presence and perception gain meaning through relation and repetition. In this way, Threshold Systems situates the viewer at the boundary between self and system, where agency is continuously negotiated rather than resolved.

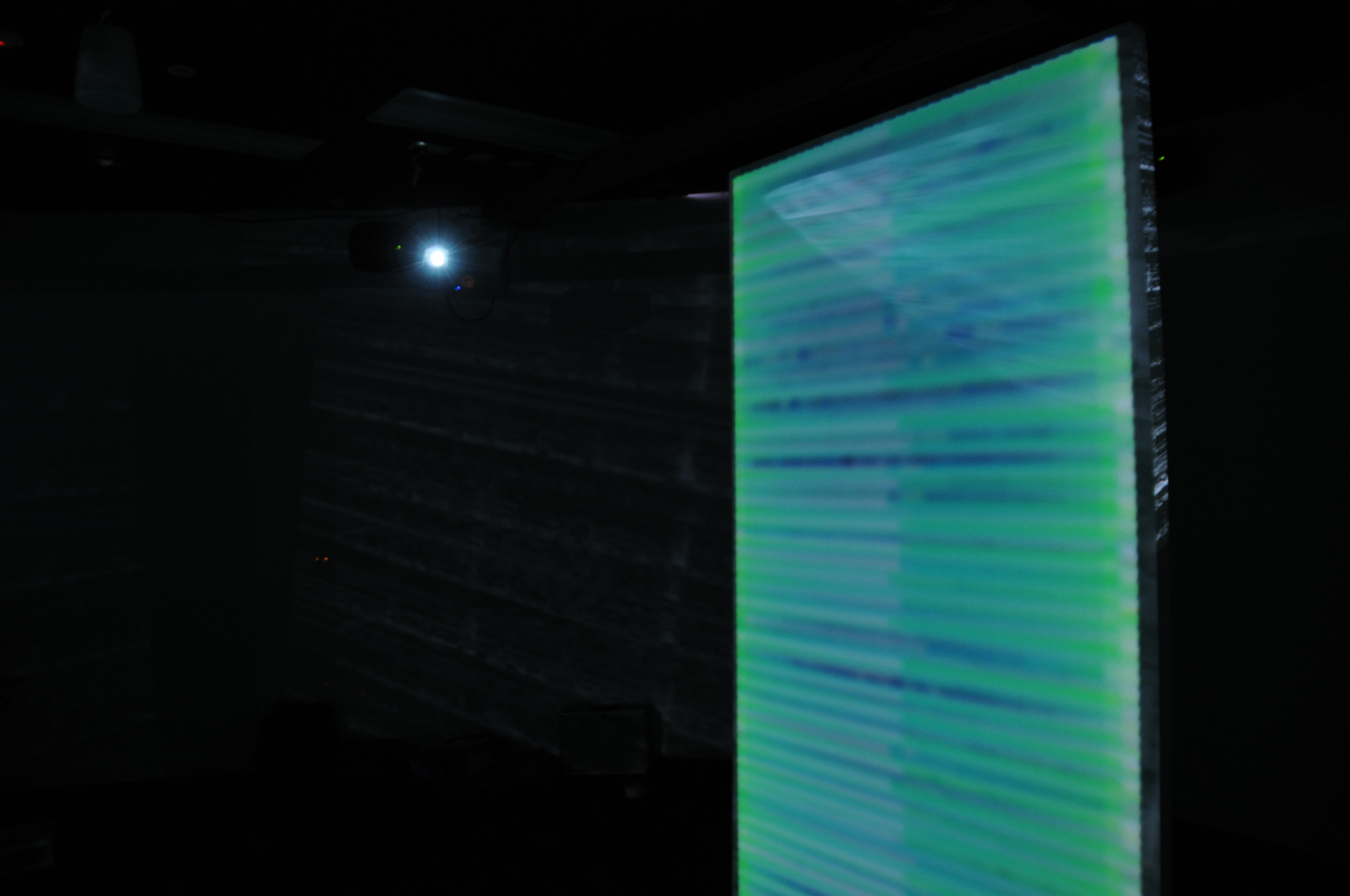

This developed into the idea that some novel kind of visual interaction with the sound could take place, allowing one to step into the sound and play. Many forms of visual data, like QR Codes or binary images, proved unintuitive before spectrograms were considered. A spectrogram offers axes of both pitch and time, concepts that are intuitive even without knowledge or appreciation of audio encoding.
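As a rough illustration of why a spectrogram reads so naturally, the sketch below turns a short synthetic tone into a grid of pixel brightnesses, with time running along one axis and pitch along the other. It is not the installation's code: the frame size, hop length, sample rate and the function names `spectrogram` and `to_pixels` are all assumptions chosen for the example, and it uses a plain (slow) discrete Fourier transform so that it needs only the Python standard library.

```python
import cmath
import math


def spectrogram(samples, frame_size=64, hop=32):
    """Return one column of frequency magnitudes per time frame.

    Hypothetical helper: each column covers bins 0..frame_size // 2,
    i.e. from 0 Hz up to half the sample rate.
    """
    columns = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        # A Hann window softens the frame edges to reduce spectral leakage.
        windowed = [
            s * (0.5 - 0.5 * math.cos(2 * math.pi * n / frame_size))
            for n, s in enumerate(frame)
        ]
        column = []
        for k in range(frame_size // 2 + 1):
            # Naive DFT for bin k; fine for a small illustrative frame.
            acc = sum(
                x * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n, x in enumerate(windowed)
            )
            column.append(abs(acc))
        columns.append(column)
    return columns


def to_pixels(columns):
    """Scale magnitudes to 0..255 so each value can be drawn as one pixel."""
    peak = max(max(col) for col in columns) or 1.0
    return [[round(255 * m / peak) for m in col] for col in columns]


# Assumed test signal: a pure 1000 Hz tone sampled at 8000 Hz.
sample_rate = 8000
tone_hz = 1000
samples = [
    math.sin(2 * math.pi * tone_hz * n / sample_rate) for n in range(512)
]

columns = spectrogram(samples)
pixels = to_pixels(columns)

# The brightest pixel in a column marks the pitch sounding at that moment.
brightest_bin = max(range(len(pixels[0])), key=lambda k: pixels[0][k])
brightest_hz = brightest_bin * sample_rate / 64
print(len(pixels), "time frames x", len(pixels[0]), "pitch bins")
print("brightest pitch in first frame:", brightest_hz, "Hz")
```

Each inner list is one vertical strip of the image. A steady tone appears as a single bright horizontal band, which is the kind of directly legible relationship between sound and picture that QR-style or binary encodings lack. Note that a magnitude-only picture like this discards phase, so on its own it would not allow the audio to be recovered exactly.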

The project was conceived in 2023, on a purely technical level, from the idea that audio might somehow be "transmitted" through visuals in such a way that the original information could be wholly recovered, with no loss and no cheating.

(Original proof-of-concept, 7 minutes)